Venture Bytes #104: LLM Orchestrators Enhancing AI Models

Lead article in VentureBytes Edition #104 - February 2024

Generative AI has become a competitive and operational imperative for businesses, but companies struggle with scale, production, and expertise. Businesses also want model-agnostic approaches that let them get the best of multiple models, which adds another layer of complexity. Large language model (LLM) orchestration is the answer.

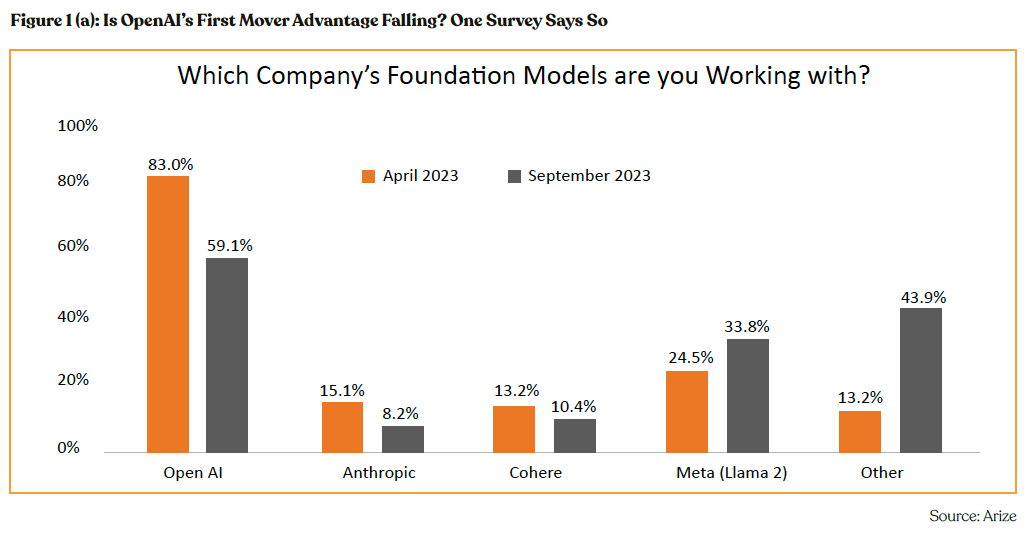

LLMs are becoming increasingly commoditized, with over 30 LLMs now in the arena, including open-source models. Market dynamics have shifted dramatically: an Arize survey found OpenAI’s market share in LLM adoption plummeting from 83% in April 2023 to just 13% six months later amid rising alternatives. This realignment underscores the growing competition and shifts the focus beyond model capabilities to the robustness of the infrastructure that supports them.

LLM orchestration is essentially the process of managing and controlling LLMs to maximize their performance and impact. Organizations are grappling with the complexities of using LLMs effectively, which involves selecting optimal models, unifying various LLMs into integrated services, and deploying applications cost-efficiently. Addressing these challenges and improving AI workflows requires combining several AI models, algorithms, and tools into a single framework. Orchestration startups span a broad spectrum, offering services from model refinement to application rollout and computational acceleration. The AI orchestration market is likely to reach $35.2 billion in 2030, up from $6.9 billion in 2022, growing at a 22.5% CAGR, per SNS Insider.
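The growth rate implied by the two market-size figures can be checked with the standard compound annual growth rate formula. The sketch below is only a sanity check; it assumes an eight-year span from 2022 to 2030, which may differ from the forecast window SNS Insider uses.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market-size figures quoted in the article, in USD billions.
START_2022 = 6.9
END_2030 = 35.2

# Assumption: growth compounds over the full 2022 -> 2030 span (8 years).
implied = cagr(START_2022, END_2030, 2030 - 2022)
print(f"Implied CAGR: {implied:.1%}")
```

The result lands in the low twenties in percentage terms, so a CAGR in that range is consistent with the quoted start and end values.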

AI Infrastructure Opportunity Is Enormous

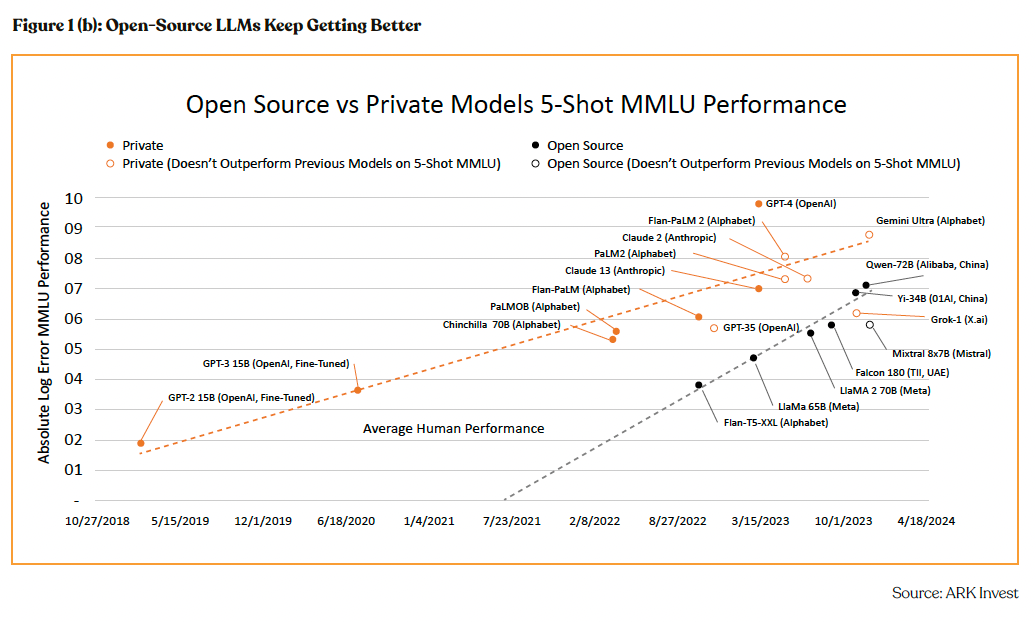

The future of AI promises complex applications, and the bedrock for this progress is a robust infrastructure, which stands as a vast and vital opportunity. The trajectory for AI applications leans heavily towards leveraging fine-tuned foundation models, tailored through additional data or parameter adjustments for specific use cases. This shift emphasizes the infrastructure’s role over mere model capabilities, highlighting a burgeoning market for AI infrastructure that is rapidly expanding and becoming increasingly complex.

The majority of enterprises prefer to operate their own LLMs rather than rely on external API providers, driven by considerations of cost, latency, and data privacy. For example, OpenAI’s pricing model charges 12 cents for every 1,000 tokens (around 700 words) for a fine-tuned model on Davinci. Conversely, by combining HuggingFace, DeepSpeed, and Ray, companies can build their own fine-tuning and serving systems for LLMs. This approach is cost-efficient (under $7 to tune a 6 billion parameter model in just 40 minutes) and also addresses the speed bottleneck. External LLMs like GPT-3.5 can take up to 30 seconds per query, which is impractical for many applications. By bringing the process in-house, companies can significantly improve performance, often achieving latency improvements of 5x or more, tailored to their application needs.
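To make the per-token pricing and latency figures concrete, here is a small back-of-the-envelope sketch. The price, the words-per-token ratio, and the 30-second and 5x figures come from the paragraph above; the monthly workload is a hypothetical number chosen only for illustration, and the sketch does not model the cost of serving a self-hosted model.

```python
API_PRICE_PER_1K_TOKENS = 0.12  # USD, fine-tuned Davinci price quoted in the article
WORDS_PER_1K_TOKENS = 700       # rough ratio quoted in the article


def tokens_for_words(words: int) -> int:
    """Estimate the token count for a given number of words."""
    return round(words / WORDS_PER_1K_TOKENS * 1000)


def api_cost_usd(tokens: int) -> float:
    """Cost of processing `tokens` tokens at the quoted per-1K-token price."""
    return tokens / 1000 * API_PRICE_PER_1K_TOKENS


# Hypothetical workload: 7 million words per month (an assumption, not from the article).
monthly_words = 7_000_000
monthly_tokens = tokens_for_words(monthly_words)
monthly_cost = api_cost_usd(monthly_tokens)
print(f"{monthly_tokens:,} tokens cost about ${monthly_cost:,.2f} per month")

# Latency: a 5x improvement on a 30-second worst case.
worst_case_external_s = 30
improved_latency_s = worst_case_external_s / 5
print(f"Worst-case latency after a 5x improvement: {improved_latency_s:.0f} s")
```

At this illustrative volume the API bill is recurring, while the quoted fine-tuning cost of under $7 is a one-off; a fair comparison would also need the hosting cost of the self-managed model, which the article does not give.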

Promising Startups in LLM Orchestration

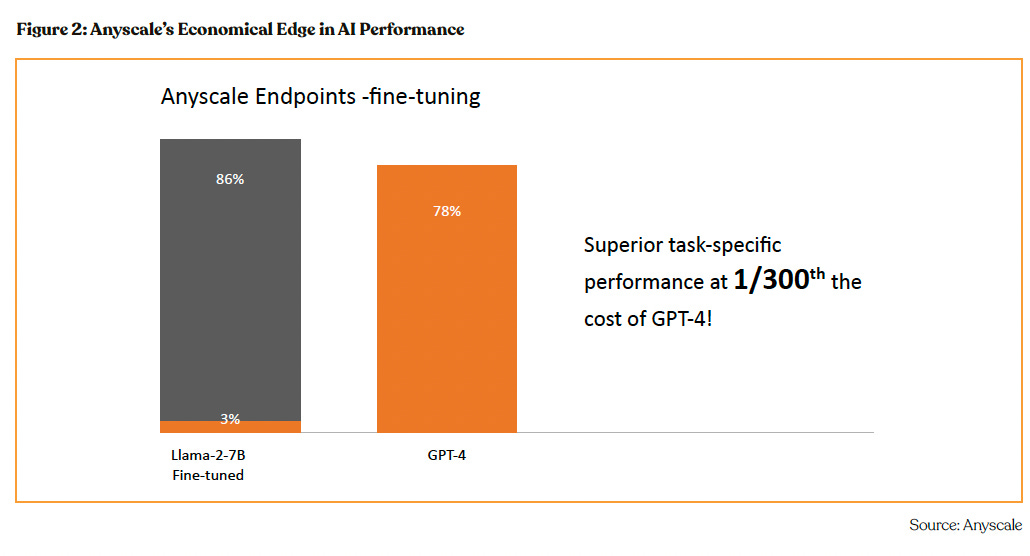

Startups such as Anyscale, Run:ai, and Pinecone are making significant strides in LLM orchestration. California-based Anyscale, which was valued at $1 billion in its $199 million Series C round, eases the scaling of AI applications through its Ray framework. Leading AI organizations such as OpenAI, Cohere, and EleutherAI use Anyscale’s Ray to train LLMs at scale. With Anyscale’s fine-tuning capabilities, the Llama-2-7B model has demonstrated task-specific performance of 86%, eclipsing GPT-4’s 78% and doing so at a fraction of the cost, merely 1/300th.

Run:ai, another Series C company, helps organizations advance their AI projects economically by virtualizing high-cost hardware resources. This enables the pooling, sharing, and strategic allocation of these resources. The company provides an array of solutions, including GPU optimization, cluster management, and the orchestration of artificial intelligence and machine learning workflows.

Pinecone, which raised a $100 million Series B round in April 2023 at a $750 million valuation, plays a crucial role in facilitating large-scale similarity searches. This capability is key for LLMs that rely on contextual and semantic understanding for information retrieval. Pinecone’s offerings enhance LLMs’ ability to process and analyze extensive data sets efficiently and accurately.
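For readers unfamiliar with similarity search, the idea can be sketched in a few lines: documents and queries are represented as vectors, and the search returns the documents whose vectors point in the most similar direction to the query. The example below is a brute-force illustration with made-up three-dimensional vectors and hypothetical document names; it is not Pinecone's API, and production vector databases typically rely on approximate nearest-neighbor indexes to handle large collections.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2):
    """Return the k (doc_id, score) pairs most similar to the query."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy embeddings (hypothetical); real embeddings have hundreds or thousands of dimensions.
toy_index = {
    "pricing-faq": [0.9, 0.1, 0.0],
    "gpu-scheduling": [0.1, 0.8, 0.3],
    "refund-policy": [0.8, 0.2, 0.1],
}

query_vector = [1.0, 0.0, 0.0]
results = top_k(query_vector, toy_index, k=2)
for doc_id, score in results:
    print(f"{doc_id}: {score:.3f}")
```

In a retrieval workflow, the top-ranked documents would then be passed to the LLM as context for answering the query.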
